What motivates Yates Coley’s work to build equitable prediction models?

Yates Coley on a backpacking trip in Washington State

Coley's work contributes to improved quality of care and better understanding of patients’ needs

Yates Coley, PhD, associate investigator at Kaiser Permanente Washington Health Research Institute (KPWHRI), recently received an Emerging Leader Award from the Committee of Presidents of Statistical Societies. The award recognizes early-career statistical scientists who are leaders in their field. It called out Coley’s impactful contributions to learning health systems science and the ethical development of clinical prediction models, tools that calculate the risk of an outcome based on a set of patient characteristics. The award also cited Coley’s leadership and advocacy to advance justice, equity, diversity, and inclusion.

In addition to their associate investigator role with KPWHRI, Coley leads the advanced analytics core for the Center for Accelerating Care Transformation (ACT Center), a learning health systems partnership between KPWHRI and Kaiser Permanente Washington.

How did you get started in your field?

I joke that I chose to study biostatistics as a way to not choose a research area. After majoring in environmental science and policy as an undergraduate, I was interested in so many facets of public health, environmental justice, and clinical care that it seemed impossible to pick just one area to focus on. Then I realized that everyone needs a statistician and, since I was pretty good at math, that statistician could be me. I loved my statistics class and how it combined critical thinking with more “objective” mathematical procedures. Statistics is a tool that can be applied to any research question. As a biostatistician, I get to collaborate across so many content areas.

How have your research interests evolved?

I first started working on clinical prediction models as a postdoctoral research fellow at Johns Hopkins University. There, I collaborated with urologists to develop a prediction model to guide active surveillance for people diagnosed with prostate cancer. It was so exciting to work on something that was going to be used immediately to improve care!

Since then, much of my independent research has focused on developing and deploying clinical prediction models. The prostate cancer project also sparked my interest in learning health systems, which is the idea that health care organizations can continuously and iteratively use data and evidence to improve care delivery. Integrating patient data into prediction models to guide decision-making is just one example of how data-driven tools can be incorporated into clinical practice and health care operations to improve the quality of care.

Why is Kaiser Permanente a good home for your research?

For one thing, we have remarkable data resources to inform research. As an integrated health care organization, Kaiser Permanente has data on care provided at our facilities and claims data for all care received outside of our facilities. This is a statistician’s dream and enables more patient-centered research — because we have such a complete picture of a person’s health, we are able to conduct analyses or create prediction models that take into account more detailed information.

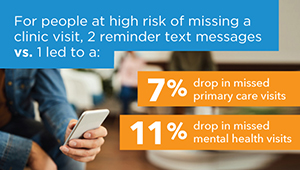

Another benefit of doing work here is having the opportunity to deepen my experience and expertise in learning health systems through both the CATALyST K12 training program and my role with the ACT Center. Through CATALyST, I received mentoring to develop as a statistician doing embedded research at Kaiser Permanente Washington, including gaining the knowledge and skills needed to partner with non-researcher clinical leaders. I also learned more about the clinical data resources available to inform care delivery in real time. With the ACT Center, I have contributed to many projects within Kaiser Permanente Washington to improve operations and care delivery, including a prediction model that is helping clinics reduce missed visits and another model that is used in urgent care for early detection and treatment of sepsis.

What are some current projects you’re excited about?

I am incredibly excited about my research project grant from the National Institute of Mental Health to develop statistical methods to reduce racial and ethnic disparities in suicide prediction models. I am privileged to be able to bring my statistical expertise to address concerns of racial justice and health equity. This work was motivated by research I did while in the CATALyST program and in collaboration with the Mental Health Research Network, which identified poor performance of several suicide prediction models for Black, American Indian, and Alaska Native patients. Systemic racism and other institutionalized barriers to receiving care impact the quality of electronic health record data used for estimating prediction models, which means that we have to be very thoughtful in their design and evaluation.

In addition to the opportunity to improve racial equity in suicide prevention, this project also has several interesting statistical opportunities. In particular, we are conducting simulations to ensure that we are able to accurately estimate how well a risk prediction model will perform for a new group of patients. This can be challenging statistically for rare events (like suicide) or for less prevalent racial and ethnic groups (when we don’t have many observations) but is really important for having confidence that we can detect when a prediction model may be performing differently across racial and ethnic groups (and, thus, could have disparate impacts).
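Editor's illustration: the statistical challenge described above can be sketched with a small simulation. This is not Coley's method or data. It is a minimal sketch under stated assumptions: a synthetic risk score that is normally distributed and shifted upward for people who experience the event, a 2% event rate, and AUC as the performance measure. The function names (`auc`, `simulate_group`, `auc_spread`) and all parameter values are hypothetical. The sketch repeatedly draws a large group and a small group from the same data-generating process and compares how much the estimated AUC varies from sample to sample.

```python
import random
import statistics


def auc(scores, labels):
    """Rank-based (Mann-Whitney) AUC. Returns None if only one class is present.

    Assumes continuous scores, so ties are not handled.
    """
    n_pos = sum(labels)
    n_neg = len(labels) - n_pos
    if n_pos == 0 or n_neg == 0:
        return None
    # Rank observations from lowest to highest score (ranks start at 1).
    order = sorted(range(len(scores)), key=scores.__getitem__)
    rank_sum = sum(rank for rank, i in enumerate(order, start=1) if labels[i] == 1)
    return (rank_sum - n_pos * (n_pos + 1) / 2) / (n_pos * n_neg)


def simulate_group(n, event_rate, signal, rng):
    """Draw n synthetic patients: a rare binary outcome and a noisy risk score.

    Hypothetical data-generating process: scores are N(signal, 1) for events
    and N(0, 1) for non-events.
    """
    labels = [1 if rng.random() < event_rate else 0 for _ in range(n)]
    scores = [rng.gauss(signal * y, 1.0) for y in labels]
    return scores, labels


def auc_spread(n, event_rate=0.02, signal=1.0, reps=100, seed=0):
    """Mean and standard deviation of the AUC estimate across repeated samples."""
    rng = random.Random(seed)
    estimates = []
    for _ in range(reps):
        scores, labels = simulate_group(n, event_rate, signal, rng)
        value = auc(scores, labels)
        if value is not None:  # skip samples with no events at all
            estimates.append(value)
    return statistics.mean(estimates), statistics.stdev(estimates)


if __name__ == "__main__":
    for group_size in (5000, 300):
        mean_auc, sd_auc = auc_spread(group_size, seed=1)
        print(f"n={group_size}: mean AUC {mean_auc:.3f}, SD {sd_auc:.3f}")
```

In this setup the model discriminates equally well in both groups by construction, yet the AUC estimate from the smaller group varies much more across samples. That extra noise is what makes it hard to tell a real performance gap from sampling variability when events are rare or a group has few observations.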

I’m also excited to keep working with clinicians to guide implementation of prediction models in clinical care. Prediction models are a powerful tool, but it’s important to understand their limitations and how they should be used, as well as ways they can be misused. Equal performance across race and ethnicity is necessary but not sufficient to ensure prediction models have an equitable impact — implementation has to also include equal access to effective, patient-centered interventions.

What do you like to do outside of work?

I have 2 dogs, a shepherd/hound mix named Zoe and a pug named Mo. I enjoy running and backpacking.
